
Written: 2020-06-07 04:24 +0000

Statistical Rethinking and Nix

This post is part of the R X Nix Nexus: Crafting Cohesive Environments series.

This post describes a transparent, automated setup for reproducible R workflows using nixpkgs, niv, and lorri. The running example throughout the post is setting up the rethinking package and running some examples from the excellent second edition of “Statistical Rethinking” by Richard McElreath.

Background

As detailed in an earlier post [1], I had set up Nix to work with non-CRAN packages. If the rest of this section is unclear, please refer back to the earlier post.

Setup

For the remainder of the post, we will set up a basic project structure:

    mkdir tryRnix/

Now we will create a shell.nix as follows [2]:

    # shell.nix
    { pkgs ? import <nixpkgs> { } }:
    with pkgs;
    let
      my-r-pkgs = rWrapper.override {
        packages = with rPackages; [
          ggplot2
          tidyverse
          tidybayes
          tidybayes.rethinking
          (buildRPackage {
            name = "rethinking";
            src = fetchFromGitHub {
              owner = "rmcelreath";
              repo = "rethinking";
              rev = "d0978c7f8b6329b94efa2014658d750ae12b1fa2";
              sha256 = "1qip6x3f6j9lmcmck6sjrj50a5azqfl6rfhp4fdj7ddabpb8n0z0";
            };
            propagatedBuildInputs = [ coda MASS mvtnorm loo shape rstan dagitty ];
          })
        ];
      };
    in mkShell {
      buildInputs = with pkgs; [ git glibcLocales openssl which openssh curl wget ];
      inputsFrom = [ my-r-pkgs ];
      shellHook = ''
        mkdir -p "$(pwd)/_libs"
        export R_LIBS_USER="$(pwd)/_libs"
      '';
      GIT_SSL_CAINFO = "${cacert}/etc/ssl/certs/ca-bundle.crt";
      LOCALE_ARCHIVE = stdenv.lib.optionalString stdenv.isLinux
        "${glibcLocales}/lib/locale/locale-archive";
    }

So we have:

    tree tryRnix

    tryRnix
    └── shell.nix

    0 directories, 1 file

Introspection

At this point:

- I was able to install packages (system and R) arbitrarily

- I was able to use project specific folders

- Unlike npm, pipenv, poetry, conda and friends, my system was not bloated by downloading and setting up the same packages every time I used them in different projects

However, though this is a major step up from being chained to RStudio and my system package manager, it is still perhaps not immediately obvious how this workflow is reproducible. Admittedly, I have defined my packages in a nice functional manner; but someone else might be tracking a different upstream channel, and thus will get different packages. Indeed, the only packages I could be sure of were the R packages I built from GitHub, since those were tied to a hash. Finally, the setup described for each project is pretty onerous, and it is not immediately clear how to leverage fantastic tools like direnv for working through this.
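To make the channel problem a bit more concrete, here is a small sketch of how one might check what `<nixpkgs>` resolves to on a given machine. The helper name `show_nixpkgs` is made up for this post; it only wraps `nix-instantiate`, and what it prints depends entirely on the machine it runs on, which is exactly the issue.

```shell
#!/usr/bin/env bash
# Hypothetical helper: report which nixpkgs the current channel setup points at.
show_nixpkgs() {
    if ! command -v nix-instantiate >/dev/null 2>&1; then
        echo "nix-instantiate not found on PATH" >&2
        return 127
    fi
    # Where does <nixpkgs> resolve to on this machine?
    nix-instantiate --find-file nixpkgs
    # Which nixpkgs version is that?
    nix-instantiate --eval -E '(import <nixpkgs> { }).lib.version'
}
```

Running `show_nixpkgs` on two machines that track different channels (or the same channel updated at different times) will generally give different answers, even with an identical shell.nix.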

Towards Reproducible Environments

The astute reader will have noticed that I mentioned that the R packages were reproducible since they were tied to a hash, and might reasonably argue that the entire Nix ecosystem is about hashing in the first place. Once we realize that, the rest is relatively simple [3].

Niv and Pinning

Niv essentially keeps track of the channel from which all the packages are installed, pinning it to a specific revision recorded in nix/sources.json. Setup is pretty minimal.

    cd tryRnix/
    nix-env -i niv
    niv init

At this point, we have:

    tree tryRnix

    tryRnix
    ├── nix
    │   ├── sources.json
    │   └── sources.nix
    └── shell.nix

    1 directory, 3 files

We will have to update our shell.nix to use the new sources.

    let
      sources = import ./nix/sources.nix;
      pkgs = import sources.nixpkgs { };
      stdenv = pkgs.stdenv;
      my-r-pkgs = pkgs.rWrapper.override {
        packages = with pkgs.rPackages; [
          ggplot2
          tidyverse
          tidybayes
        ];
      };
    in pkgs.mkShell {
      buildInputs = with pkgs; [ git glibcLocales openssl which openssh curl wget my-r-pkgs ];
      shellHook = ''
        mkdir -p "$(pwd)/_libs"
        export R_LIBS_USER="$(pwd)/_libs"
      '';
      GIT_SSL_CAINFO = "${pkgs.cacert}/etc/ssl/certs/ca-bundle.crt";
      LOCALE_ARCHIVE = stdenv.lib.optionalString stdenv.isLinux
        "${pkgs.glibcLocales}/lib/locale/locale-archive";
    }

We could inspect and edit these sources by hand, but it is much more convenient

to simply use niv again when we need to update these.

    cd tryRnix/
    niv update nixpkgs -b nixpkgs-unstable
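To see which revision the project ends up pinned to, one can peek into nix/sources.json. The following is only a rough sketch: it assumes the layout that `niv init` generates (a top-level "nixpkgs" entry with a "rev" field, one key per line), and `pinned_rev` is a hypothetical helper name. A real JSON tool such as jq would be more robust than this text matching.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the nixpkgs revision recorded by niv.
# Assumes pretty-printed JSON with one key per line, as niv writes it.
pinned_rev() {
    local sources="${1:-nix/sources.json}"
    awk '
        # Start looking once the "nixpkgs" entry begins.
        /"nixpkgs" *:/ { in_pkg = 1 }
        # The first "rev" key after that belongs to nixpkgs.
        in_pkg && /"rev" *:/ {
            gsub(/.*"rev" *: *"|".*/, "")
            print
            exit
        }
    ' "$sources"
}
```

Calling `pinned_rev` from inside tryRnix/ before and after a `niv update` is a quick way to confirm that the pin actually moved.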

At this stage we have a reproducible set of packages ready to use. However, it is still pretty annoying to have to type nix-shell every time, and to wait while it rebuilds whenever we change things.

Lorri and Direnv

In the past, I have made my admiration for direnv very clear (especially for python-poetry). However, though direnv does allow us to include arbitrary bash logic into our projects, it would be nice to have something which has some defaults for nix. Thankfully, the folks at TweagIO developed lorri to scratch that itch.

The basic setup is simple:

    nix-env -i lorri
    cd tryRnix/
    lorri init

    tree -a tryRnix/

    tryRnix/
    ├── .envrc
    ├── nix
    │   ├── sources.json
    │   └── sources.nix
    └── shell.nix

    1 directory, 4 files

We can and should inspect the environment lorri wants us to load, starting with the .envrc file it generated for direnv:

    cat tryRnix/.envrc

    $(lorri direnv)

In and of itself that is not too descriptive, so we should run lorri direnv on our own first.

    EVALUATION_ROOT="$HOME/.cache/lorri/gc_roots/407bd4df60fbda6e3a656c39f81c03c2/gc_root/shell_gc_root"

    watch_file "/run/user/1000/lorri/daemon.socket"
    watch_file "$EVALUATION_ROOT"

    #!/usr/bin/env bash
    # ^ shebang is unused as this file is sourced, but present for editor
    # integration. Note: Direnv guarantees it *will* be parsed using bash.

    function punt () {
        :
    }

    # move "origPreHook" "preHook" "$@";;
    move() {
        srcvarname=$1 # example: varname might contain the string "origPATH"
        # drop off the source variable name
        shift

        destvarname=$1 # example: destvarname might contain the string "PATH"
        # drop off the destination variable name
        shift

        # like: export origPATH="...some-value..."
        export "${@?}";

        # set $original to the contents of the variable $srcvarname
        # refers to
        eval "$destvarname=\"${!srcvarname}\""

        # mark the destvarname as exported so direnv picks it up
        # (shellcheck: we do want to export the content of destvarname!)
        # shellcheck disable=SC2163
        export "$destvarname"

        # remove the export from above, ie: export origPATH...
        unset "$srcvarname"
    }

    function prepend() {
        varname=$1 # example: varname might contain the string "PATH"

        # drop off the varname
        shift

        separator=$1 # example: separator would usually be the string ":"

        # drop off the separator argument, so the remaining arguments
        # are the arguments to export
        shift

        # set $original to the contents of the the variable $varname
        # refers to
        original="${!varname}"

        # effectfully accept the new variable's contents
        export "${@?}";

        # re-set $varname's variable to the contents of varname's
        # reference, plus the current (updated on the export) contents.
        # however, exclude the ${separator} unless ${original} starts
        # with a value
        eval "$varname=${!varname}${original:+${separator}${original}}"
    }

    function append() {
        varname=$1 # example: varname might contain the string "PATH"

        # drop off the varname
        shift

        separator=$1 # example: separator would usually be the string ":"
        # drop off the separator argument, so the remaining arguments
        # are the arguments to export
        shift


        # set $original to the contents of the the variable $varname
        # refers to
        original="${!varname:-}"

        # effectfully accept the new variable's contents
        export "${@?}";

        # re-set $varname's variable to the contents of varname's
        # reference, plus the current (updated on the export) contents.
        # however, exclude the ${separator} unless ${original} starts
        # with a value
        eval "$varname=${original:+${original}${separator}}${!varname}"
    }

    varmap() {
        if [ -f "$EVALUATION_ROOT/varmap-v1" ]; then
            # Capture the name of the variable being set
            IFS="=" read -r -a cur_varname <<< "$1"

            # With IFS='' and the `read` delimiter being '', we achieve
            # splitting on \0 bytes while also preserving leading
            # whitespace:
            #
            # bash-3.2$ printf ' <- leading space\0bar\0baz\0' \
            # | (while IFS='' read -d $'\0' -r x; do echo ">$x<"; done)
            # > <- leading space<
            # >bar<
            # >baz<```
            while IFS='' read -r -d '' map_instruction \
                && IFS='' read -r -d '' map_variable \
                && IFS='' read -r -d '' map_separator; do
                unset IFS

                if [ "$map_variable" == "${cur_varname[0]}" ]; then
                    if [ "$map_instruction" == "append" ]; then
                        append "$map_variable" "$map_separator" "$@"
                        return
                    fi
                fi
            done < "$EVALUATION_ROOT/varmap-v1"
        fi


        export "${@?}"
    }

    function declare() {
        if [ "$1" == "-x" ]; then shift; fi

        # Some variables require special handling.
        #
        # - punt: don't set the variable at all
        # - prepend: take the new value, and put it before the current value.
        case "$1" in
            # vars from: https://github.com/NixOS/nix/blob/92d08c02c84be34ec0df56ed718526c382845d1a/src/nix-build/nix-build.cc#L100
            "HOME="*) punt;;
            "USER="*) punt;;
            "LOGNAME="*) punt;;
            "DISPLAY="*) punt;;
            "PATH="*) prepend "PATH" ":" "$@";;
            "TERM="*) punt;;
            "IN_NIX_SHELL="*) punt;;
            "TZ="*) punt;;
            "PAGER="*) punt;;
            "NIX_BUILD_SHELL="*) punt;;
            "SHLVL="*) punt;;

            # vars from: https://github.com/NixOS/nix/blob/92d08c02c84be34ec0df56ed718526c382845d1a/src/nix-build/nix-build.cc#L385
            "TEMPDIR="*) punt;;
            "TMPDIR="*) punt;;
            "TEMP="*) punt;;
            "TMP="*) punt;;

            # vars from: https://github.com/NixOS/nix/blob/92d08c02c84be34ec0df56ed718526c382845d1a/src/nix-build/nix-build.cc#L421
            "NIX_ENFORCE_PURITY="*) punt;;

            # vars from: https://www.gnu.org/software/bash/manual/html_node/Bash-Variables.html (last checked: 2019-09-26)
            # reported in https://github.com/target/lorri/issues/153
            "OLDPWD="*) punt;;
            "PWD="*) punt;;
            "SHELL="*) punt;;

            # https://github.com/target/lorri/issues/97
            "preHook="*) punt;;
            "origPreHook="*) move "origPreHook" "preHook" "$@";;

            *) varmap "$@" ;;
        esac
    }

    export IN_NIX_SHELL=impure

    if [ -f "$EVALUATION_ROOT/bash-export" ]; then
        # shellcheck disable=SC1090
        . "$EVALUATION_ROOT/bash-export"
    elif [ -f "$EVALUATION_ROOT" ]; then
        # shellcheck disable=SC1090
        . "$EVALUATION_ROOT"
    fi

    unset declare

    Jun 06 19:02:32.368 INFO lorri has not completed an evaluation for this project yet, expr: $HOME/Git/Github/WebDev/Mine/haozeke.github.io/content-org/tryRnix/shell.nix
    Jun 06 19:02:32.368 WARN `lorri direnv` should be executed by direnv from within an `.envrc` file, expr: $HOME/Git/Github/WebDev/Mine/haozeke.github.io/content-org/tryRnix/shell.nix
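The one piece of that output which took me a moment to parse is the `${original:+${separator}${original}}` expansion used by prepend. Below is a stripped-down sketch of just that idea, without the eval and export machinery. The function name `join_front` and the store path are invented for illustration and are not part of lorri.

```shell
#!/usr/bin/env bash
# Put a new value in front of an existing one. The separator is only
# added when the existing value is non-empty, so an empty original
# never leaves a dangling ":" behind.
join_front() {
    local new=$1 separator=$2 original=$3
    printf '%s\n' "${new}${original:+${separator}${original}}"
}

# Existing PATH-like value: the new entry is prepended with a ":".
join_front "/nix/store/example-R/bin" ":" "/usr/bin:/bin"
# prints /nix/store/example-R/bin:/usr/bin:/bin

# Empty original: no separator is emitted.
join_front "/nix/store/example-R/bin" ":" ""
# prints /nix/store/example-R/bin
```

This is why PATH is handled by prepend in the case statement while most other variables are simply punted: the Nix-provided entries should win, but the existing PATH should still be reachable.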

Upon inspection, that seems to check out. So now we can enable this.

    direnv allow

Additionally, we will need to stick to using a pure environment as much as possible to prevent unexpected situations. So we set:

    # .envrc
    eval "$(lorri direnv)"
    nix-shell --run bash --pure

There’s still a catch though. We need to have the lorri daemon running to make sure the packages are built automatically without us having to exit the shell and re-run things. We can turn to the documentation for this. Essentially, we need to have a user-level systemd socket file and service for lorri.

    # ~/.config/systemd/user/lorri.socket
    [Unit]
    Description=Socket for Lorri Daemon

    [Socket]
    ListenStream=%t/lorri/daemon.socket
    RuntimeDirectory=lorri

    [Install]
    WantedBy=sockets.target

    # ~/.config/systemd/user/lorri.service
    [Unit]
    Description=Lorri Daemon
    Requires=lorri.socket
    After=lorri.socket

    [Service]
    ExecStart=%h/.nix-profile/bin/lorri daemon
    PrivateTmp=true
    ProtectSystem=strict
    ProtectHome=read-only
    Restart=on-failure

With that we are finally ready to start working with our auto-managed, reproducible environments.

    systemctl --user daemon-reload && \
    systemctl --user enable --now lorri.socket
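A quick way to confirm that the socket unit did its job is to look for the socket file itself. This is a minimal sketch: the helper names are hypothetical, and it assumes that `%t` in the unit file corresponds to `$XDG_RUNTIME_DIR` (falling back to /run/user/<uid>, which matches the path seen in the lorri direnv output earlier).

```shell
#!/usr/bin/env bash
# Path where the socket unit is expected to listen.
# %t in a user unit corresponds to $XDG_RUNTIME_DIR; the fallback to
# /run/user/<uid> is an assumption about a typical systemd setup.
lorri_socket_path() {
    printf '%s\n' "${XDG_RUNTIME_DIR:-/run/user/$(id -u)}/lorri/daemon.socket"
}

# Succeeds only if a socket file exists at that path.
lorri_socket_ready() {
    [ -S "$(lorri_socket_path)" ]
}

if lorri_socket_ready; then
    echo "lorri socket found at $(lorri_socket_path)"
else
    echo "no lorri socket at $(lorri_socket_path)"
fi
```

If the socket is missing, `systemctl --user status lorri.socket` is the next place to look.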

Rethinking

As promised, we will first test the setup to see that everything is working. Now is also a good time to try the tidybayes.rethinking package. In order to use it, we will need to define the rethinking package in such a way that we can pass it to the build inputs of tidybayes.rethinking. We will modify our new shell.nix as follows:

    # shell.nix
    let
      sources = import ./nix/sources.nix;
      pkgs = import sources.nixpkgs { };
      stdenv = pkgs.stdenv;
      rethinking = with pkgs.rPackages;
        buildRPackage {
          name = "rethinking";
          src = pkgs.fetchFromGitHub {
            owner = "rmcelreath";
            repo = "rethinking";
            rev = "d0978c7f8b6329b94efa2014658d750ae12b1fa2";
            sha256 = "1qip6x3f6j9lmcmck6sjrj50a5azqfl6rfhp4fdj7ddabpb8n0z0";
          };
          propagatedBuildInputs = [ coda MASS mvtnorm loo shape rstan dagitty ];
        };
      tidybayes_rethinking = with pkgs.rPackages;
        buildRPackage {
          name = "tidybayes.rethinking";
          src = pkgs.fetchFromGitHub {
            owner = "mjskay";
            repo = "tidybayes.rethinking";
            rev = "df903c88f4f4320795a47c616eef24a690b433a4";
            sha256 = "1jl3189zdddmwm07z1mk58hcahirqrwx211ms0i1rzbx5y4zak0c";
          };
          propagatedBuildInputs =
            [ dplyr tibble rlang MASS tidybayes rethinking rstan ];
        };
      rEnv = pkgs.rWrapper.override {
        packages = with pkgs.rPackages; [
          ggplot2
          tidyverse
          tidybayes
          devtools
          modelr
          cowplot
          ggrepel
          RColorBrewer
          purrr
          forcats
          rstan
          rethinking
          tidybayes_rethinking
        ];
      };
    in pkgs.mkShell {
      buildInputs = with pkgs; [ git glibcLocales which ];
      inputsFrom = [ rEnv ];
      shellHook = ''
        mkdir -p "$(pwd)/_libs"
        export R_LIBS_USER="$(pwd)/_libs"
      '';
      GIT_SSL_CAINFO = "${pkgs.cacert}/etc/ssl/certs/ca-bundle.crt";
      LOCALE_ARCHIVE = stdenv.lib.optionalString stdenv.isLinux
        "${pkgs.glibcLocales}/lib/locale/locale-archive";
    }

The main thing to note here is that rEnv is passed to inputsFrom and NOT buildInputs, so the shell receives the build inputs of the derivation we create here (R and the packages listed above) rather than only the wrapper itself.

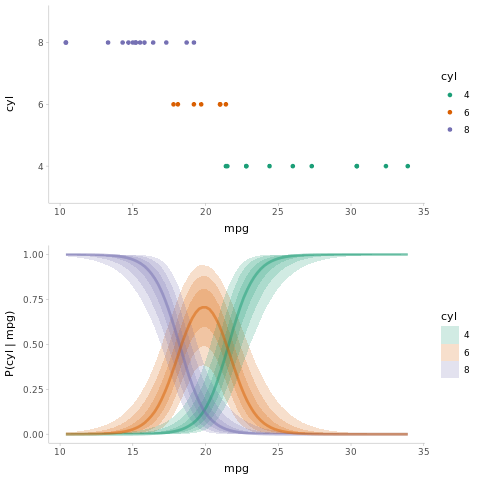

Let us try to get a nice graphic for the conclusion.

    library(magrittr)
    library(dplyr)
    library(purrr)
    library(forcats)
    library(tidyr)
    library(modelr)
    library(tidybayes)
    library(tidybayes.rethinking)
    library(ggplot2)
    library(cowplot)
    library(rstan)
    library(rethinking)
    library(ggrepel)
    library(RColorBrewer)

    theme_set(theme_tidybayes())
    rstan_options(auto_write = TRUE)
    options(mc.cores = parallel::detectCores())


    set.seed(5)
    n = 10
    n_condition = 5
    ABC =
      tibble(
        condition = factor(rep(c("A","B","C","D","E"), n)),
        response = rnorm(n * 5, c(0,1,2,1,-1), 0.5)
      )

    mtcars_clean = mtcars %>%
      mutate(cyl = factor(cyl))

    # ordinal regression of cylinder count on mpg
    m_cyl = ulam(alist(
        cyl ~ dordlogit(phi, cutpoint),
        phi <- b_mpg*mpg,
        b_mpg ~ student_t(3, 0, 10),
        cutpoint ~ student_t(3, 0, 10)
      ),
      data = mtcars_clean,
      chains = 4,
      cores = parallel::detectCores(),
      iter = 2000
    )

    cutpoints = m_cyl %>%
      recover_types(mtcars_clean) %>%
      spread_draws(cutpoint[cyl])

    # define the last cutpoint
    last_cutpoint = tibble(
      .draw = 1:max(cutpoints$.draw),
      cyl = "8",
      cutpoint = Inf
    )

    cutpoints = bind_rows(cutpoints, last_cutpoint) %>%
      # define the previous cutpoint (cutpoint_{j-1})
      group_by(.draw) %>%
      arrange(cyl) %>%
      mutate(prev_cutpoint = lag(cutpoint, default = -Inf))

    fitted_cyl_probs = mtcars_clean %>%
      data_grid(mpg = seq_range(mpg, n = 101)) %>%
      add_fitted_draws(m_cyl) %>%
      inner_join(cutpoints, by = ".draw") %>%
      mutate(`P(cyl | mpg)` =
        # this part is logit^-1(cutpoint_j - beta*x) - logit^-1(cutpoint_{j-1} - beta*x)
        plogis(cutpoint - .value) - plogis(prev_cutpoint - .value)
      )


    data_plot = mtcars_clean %>%
      ggplot(aes(x = mpg, y = cyl, color = cyl)) +
      geom_point() +
      scale_color_brewer(palette = "Dark2", name = "cyl")

    fit_plot = fitted_cyl_probs %>%
      ggplot(aes(x = mpg, y = `P(cyl | mpg)`, color = cyl)) +
      stat_lineribbon(aes(fill = cyl), alpha = 1/5) +
      scale_color_brewer(palette = "Dark2") +
      scale_fill_brewer(palette = "Dark2")

    png(filename="../images/rethinking.png")
    plot_grid(ncol = 1, align = "v",
      data_plot,
      fit_plot
    )
    # call dev.off() (with parentheses) so the device is closed and the PNG is written
    dev.off()

Finally we will run this in our environment.

    Rscript tesPlot.R

Conclusions

This post was really more of an exploratory follow-up to the previous post, and does not really work in isolation. Then again, at this point everything seems to have worked out well. R with Nix has finally become a truly viable combination for any and every analysis under the sun. Some parts of the workflow are still a bit janky, but will probably resolve themselves over time.

Update: There is a final part detailing automated ways of reloading the system configuration.

[1] My motivations were laid out in the aforementioned post, and will not be repeated here.

[2] For why this is written the way it is, see the aforementioned post.

[3] Christine Dodrill has a great write-up on using these tools as well.

Series info

R X Nix Nexus: Crafting Cohesive Environments series

- Nix with R and devtools

- Statistical Rethinking and Nix <-- You are here!

- Emacs for Nix-R